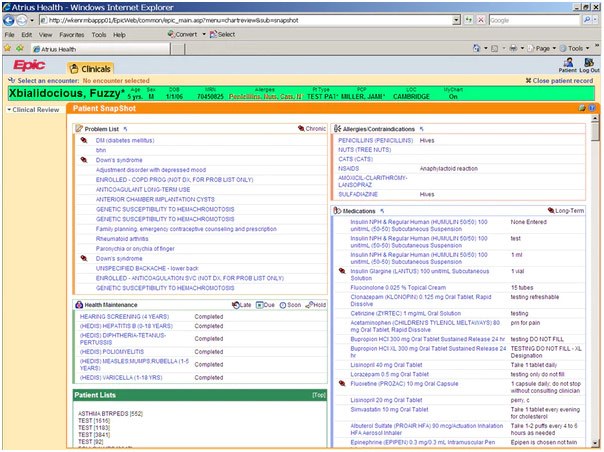

Physicians “chart” information: they make sure each patient’s medical history, diagnoses, medications, and so on are written down. Not too long ago, this was all done on paper, but with the computer revolution came a clumsy transition to electronic medical records (EMRs). The hope was that this information would be stored more securely and could be transferred easily between doctors, making the entire healthcare experience better for everyone. Instead, EMRs have become a cumbersome mess. Many tech companies hope to use artificial intelligence (AI) to comb through EMRs, operating under the assumption that the information will be amenable to analysis. They believe EMRs largely reflect the truth, so that an AI model can work around whatever issues the records contain. To put it plainly, though, this view does not hold water.

Sample Patient Record from Epic’s Electronic Medical Records

First and foremost, AI will be dealing with a derivation of the data, not the raw data itself. The data starts in the chart but then gets transformed into administrative data. From there, coders convert the data into numeric codes, but these coders generally do not have the medical training to question or critically evaluate what a medical specialist has done. They can therefore only translate what is written and cannot fill in the gaps that physicians may leave. For example, a physician may chart A and C, leaving out B because anyone with medical training would know B is part of that process. Physicians simply do not chart in a way that facilitates translation by coders, largely because that is not the primary purpose of charting.

Artist Depiction of the Electronic Medical Records Problem

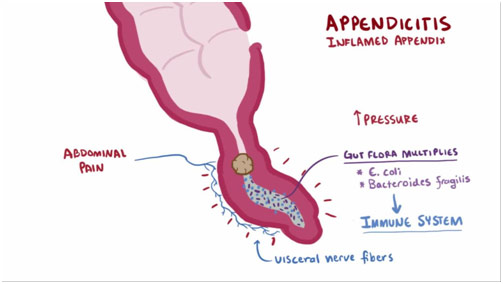

Furthermore, the charts do not necessarily depict what the doctor is thinking, so the information is not really a faithful representation of reality. For example, arriving at a diagnosis is a process, and not all steps of the process may be documented. Take a patient who is admitted to the emergency room (ER) with belly pain. The ER physician considers that it could be the flu, a perforated ulcer, or a ruptured ectopic pregnancy, but, with other diagnostic information, decides to presume appendicitis and charts that. The other potential diagnoses, one of which may turn out to be the correct one, might never make it into the chart. In another example, imagine a patient with multiple mental health issues. Not only may the physician leave something out because the list of co-occurring disorders is so long, but the physician may also incorrectly deem certain personal details irrelevant and not document them.

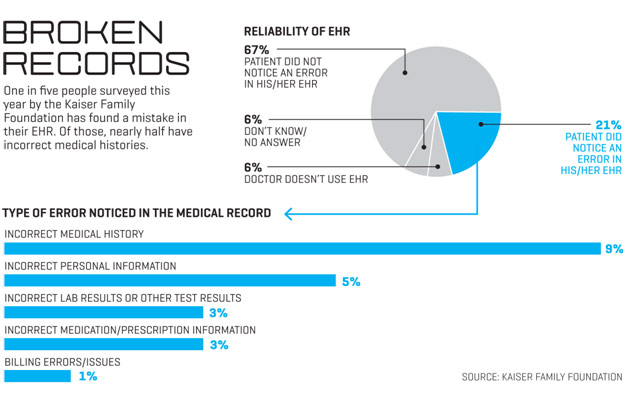

Similarly, take a real-life example of an intubated patient in the ICU. The patient’s condition does not change much from day to day, so the physician simply copies and pastes the previous ICU notes. Documentation is such a huge burden that workflow shortcuts like this become a necessity. However, when the patient is discharged from the ICU, the EMR wrongly maintains that the patient is still intubated because the physician forgot to update the ICU notes with the patient’s extubation. With physicians attempting to streamline the charting process because it has become so burdensome, the question is not what in the charts is inaccurate but what in the charts is actually accurate.

Brief Appendicitis Description

Definitions that evolve over time further degrade the quality of the documented data. For instance, breast cancer classifications have changed enormously over time, and not all physicians stay up to date with the latest developments. A short while ago, staging for breast cancer was based on the TNM criteria: tumor size, lymph node status, and metastasis. Now, staging also takes genetics and other less quantifiable factors into account, making the classification of breast cancer significantly more complex and easier to botch. Consequently, classification systems get misapplied and misunderstood. The result is inconsistent documentation.

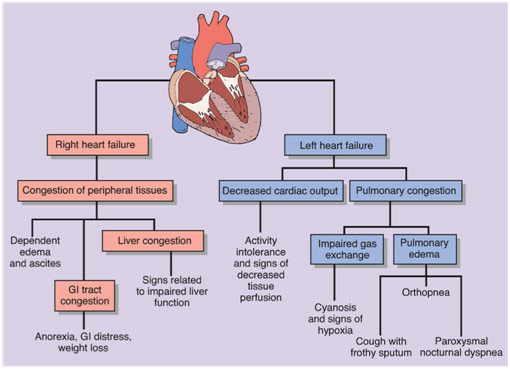

Perhaps even more remarkably, with increasing specialization, the universe of each specialist’s expertise has become progressively smaller. For instance, a patient may tell his rheumatologist that he has congestive heart failure, which the rheumatologist then conscientiously documents. However, the rheumatologist does not dive into the deeper classifications and does not specify whether the patient has left-sided, right-sided, systolic, or diastolic heart failure. Each of these classifications has different implications, which makes the distinction vital to note. To add insult to injury, the rheumatologist may not order an echocardiogram to confirm that the patient has congestive heart failure in the first place, leaving the veracity of this patient-reported condition unclear.

Varying Implications of Just Two Types of Heart Failure

Even if the correct information is charted, EMRs are so tortuous that it is quite difficult for coders to sift through them and find what is actually true. A large part of why EMRs are so bloated is that we have put the cart before the horse. In other words, when hospitals suddenly switched over to electronic records, business development outpaced clinical development: it is more difficult to account for all the complexities of medicine than it is to account for all the complexities of billing. Thus, EMRs became optimized for business functions such as Medicare compliance, and, as a result, documenting became a frustrating box for physicians to mindlessly check off.

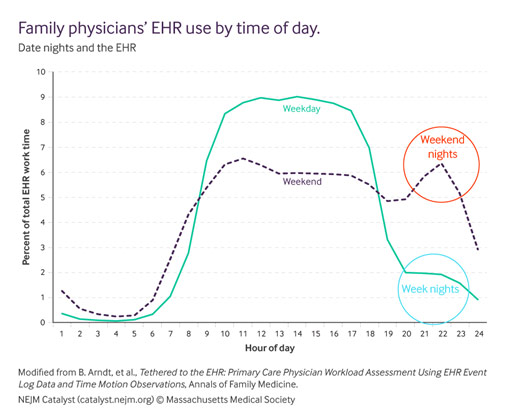

In what is known as “Pajama Time,” physicians are routinely forced to go home and stay up late to finish documentation instead of relaxing or spending time with their families. The issue here is that, like everyone, physicians need to be able to recuperate in their homes without work invading their peace. Only then can physicians come back energized and ready to properly care for their patients. When a physician’s time at home is instead spent documenting for the main purpose of billing, we start seeing higher rates of burnout. Indeed, documentation is so burdensome that the transformation of physicians into “data-entry clerks” is one of the prime reasons for physician burnout. EMRs no longer serve their stated purpose; they are awful communication tools with variable value to front-line providers because of their penchant for errors.

Time Spent by Family Medicine Practitioners on Electronic Health Records Over the Day

If we want EMRs to be amenable to AI analysis so that we may come up with new discoveries, we need to get rid of this “garbage in, garbage out” problem and increase the quality of our input data. Thankfully, there is much work going on towards developing a solution for this issue, putting medicine, not business, back in the lead of EMRs. The hope is to make a more user-friendly way of documenting and to create an infrastructure that gives physicians digital services that actually help them provide care. Only when EMRs become an easy-to-use and functional tool for physicians will they begin serving their stated purpose to help transform healthcare for the better.
